Rick Ledbetter is a professional musician and composer based in the US. In this blog post, he talks about his experiences using and programming hearing aids for music, and his advice for other musicians with a hearing loss.

“I have been a musician, a bass player and composer / arranger, for over 50 years, and I have a profound bilateral hearing loss. I have played professionally in all types of situations from small clubs to arenas, and in recording studios from coast to coast. I have my own computer-based music production studio, and I have been programming my own aids for over a decade.

“Around 1989, at a recording session, the engineer told me that he had my headphones up very loud, and suggested I get my hearing tested. The test revealed my worst fear – I was losing my hearing, and subsequent tests revealed an increasing loss. It was harder and harder for me to hear conversation at rehearsals, some soft musical passages were hard to hear, my pitch perception fell, and some musicians got upset with me when I couldn’t hear what they were saying. I began to lose work.

“I bought my first pair of analog aids and they sounded terrible for music – tinny, harsh, and loud. They distorted easily, and they had no low end, so they went straight into the drawer. Then I went to digital, and I encountered the same issues at a greater purchase price. My audiologist tried hard, but unsuccessfully, to help me find a setting for live performance. I endured months of “try this and come back in two weeks”. With my background and ability to focus on a particular sound and know its frequency, I could better describe what I heard, and while this made his chore of solving the problems a bit easier, it was still trial and error. At each visit, I watched him operate the software, and I saw how much it was like digital audio production software. I wondered why I couldn’t do this myself, so I got the software and interface, and off I went. I learned the software and made improvements in the sound of my aids, using basic music production principles. So far, as my loss has progressed, I have had 5 sets of aids, and I have programmed them all.

“The journey hasn’t been easy. While, for me, the various hearing aid apps were fairly easy to learn, each make of aid had its own set of issues. Some didn’t have enough input stage headroom to handle on stage volume levels, so they produced the nasty, buzzy sound of digital distortion. All of them suffered from over-reliance on sound processing: anti-feedback, noise reduction, speech enhancers, environmental adapters, directional microphone switching, and more. All of these adversely affect the sound of music. For example, anti-feedback does not know the difference between feedback and the sound of a sustained flute. And any type of sound processor that is active, that is, listening and trying to compensate in real time, gets totally confused by music. So, to properly adjust an aid for best music quality, all of that has to be turned off first.

“Traditionally, aids have a “music” program, a program that usually is a single EQ curve. While this may work for sitting and listening to recorded music, it does not work well for live music or on stage performance because it does not have enough dynamic range to accommodate both music and speech. Musicians need to be able to talk to one another in between playing, and they need a single program to work in all situations. We can’t be distracted by switching programs, so a single program must be created to address our needs.

“I think I have managed fairly well. At least in my case, I have found that reworking the traditional three EQ curve program produces much better results for live music. I could go into detail about this, but that’s another subject. Sometimes I also use a Bluetooth wireless device that sends audio directly into my aids. It’s marketed as a TV Streamer. Fortunately, its input requirements happen to be the same as a mixing desk’s output, so I can use my aids as in-ear monitors, and use the cell phone app to mix between the signal and the sound from the aids’ microphones. A nice thing to have.

“I was asked to include a bit about working with audiologists, but I must be frank: in my experience there are audiologists who don’t know how to fit aids for musicians. So ask your prospective audiologist about their experience fitting for musicians before you buy – choosing the right audiologist is just as important as choosing the right hearing aid. Hearing professionals must understand that our professional reputation, our performance, and our livelihood, not to mention our stress levels, depend on our aids, and they must be right from the beginning. We cannot go through weeks of “try this and see”. We need our aids to work properly from day one.

“The audiologist would ideally have quality sound amplification gear capable of on stage volume levels. Sorry, computer speakers won’t do the job. A real time analyzer is a valuable tool to test the aids’ performance in the ear. A collection of sound samples is handy, but note that recorded music is compressed, so you will not hear the full dynamic range of music samples, but they are still useful. If possible, you should bring your instrument to the office and play it, while adjustments are made, until it sounds right to you.

“But this is highly critical: to properly adjust an aid for best music quality, all of the sound processing must be turned off. You cannot get good sound quality if the aids’ sound processors are active while you are hearing music. For example, anti-feedback thinks a flute is feedback, so it will reduce the volume of a sustained flute note, and “hunt” while the flute is being played, in an attempt to stop what it thinks is feedback. So you will hear treble sounds warble and drop out. Of course, this is unacceptable.

“In the meantime, let me offer some solutions:

“Miscommunication is a big problem in the process of getting the aids set right. The patient and the audiologist need to establish a common language to describe and understand what the hearing aid wearer is experiencing. To use colour as a comparison, your definition of red may not be the same as another’s. So if you tell an audiologist “too screechy”, what gets adjusted could be 3000Hz, when what you meant is actually at 1500Hz. Or worse, an adjustment is made without regard to how the various sound processors may be causing the problem, or affecting the adjustment. So a standard language is needed. To that end, a few tools are needed:

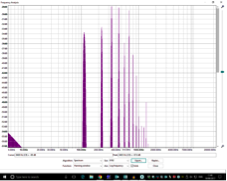

1 – A good bar graph real time analyzer app for your cell phone, with screenshot capture capability. Most of them have a snapshot feature, so get one that has this. It allows you to save the readout for recall at a later time. The bar graph type is easier to read when determining at what frequency and at what volume level the problem occurs. Note that Android phones have a lower audio ceiling than iPhones do, but they can still be relied upon up to 85dB. Many apps are free, and many are low cost. The professional apps will, of course, give better results at a greater purchase price.

2 – A pitch to frequency chart, to translate what is off on your musical instrument into numbers. There are several on the internet, some that lay out a piano, others that include other instruments. Here is a link to one I like:

http://obiaudio.com/eq-chart/

A chart of the frequencies of speech is a good thing to have, too. Audiologists have them.

3 – A list of the frequencies of everyday noisemakers. For instance, a coffee grinder is about 750Hz, dropping a metal fork or spoon into a steel sink is about 1000Hz, flushing the toilet (yes, I’m a Yank) is 500Hz to 750Hz, harsh sibilants are about 4000Hz, and the sound of your voice through your aids is about 500Hz.

“The cell phone real time analyzer takes a lot of guesswork out of the process. It lets you see the frequencies of what you are hearing. When you have problems hearing, open it, take a readout, and save it. The readout will show what you are hearing, at what frequency, and how loud it is. Hearing aids have three EQ curves to adjust, each for a different volume level (dB): soft (50dB), normal (65dB), and loud (86dB). It is very important to know at what volume level something is too loud or too soft, so the audiologist can make the exact adjustment. In other words, while conversation at soft levels may sound just fine to you, music, which is much louder, may not, so it requires adjusting the loud EQ curve.
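As a rough illustration of why the volume level matters, the sketch below turns an analyzer reading into a note for the audiologist. It uses the three nominal levels quoted above; the "closest level wins" rule and the function names are assumptions made for this example, not how any fitting software actually chooses a curve.

```python
# Nominal input levels for the three EQ curves, as quoted in the text.
CURVE_LEVELS_DB = {"soft": 50, "normal": 65, "loud": 86}


def nearest_curve(measured_db: float) -> str:
    """Return the curve whose nominal level is closest to the reading.

    Assumption: picking the closest nominal level is a simplification
    for illustration only.
    """
    return min(CURVE_LEVELS_DB, key=lambda name: abs(CURVE_LEVELS_DB[name] - measured_db))


def describe_problem(freq_hz: int, measured_db: int, complaint: str) -> str:
    """Build a one-line note combining frequency, level, and complaint."""
    curve = nearest_curve(measured_db)
    return f"{complaint} around {freq_hz} Hz at {measured_db} dB (check the {curve} curve)"


if __name__ == "__main__":
    # A hypothetical reading taken during a loud rehearsal.
    print(describe_problem(3000, 90, "too harsh"))
```

A note in this form gives the audiologist a frequency, a level, and a description in one line, rather than a word like “screechy” on its own.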

“The pitch to frequency charts work great, too. Just sit at a piano and play each note, pay attention to which notes are too loud and which are too soft, and write down those notes. Then look at the chart and translate each note to its corresponding frequency. The audiologist can use this information to make the proper adjustments to your aids.
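The chart lookup described above can also be approximated with the standard equal temperament formula. The sketch below assumes A4 is tuned to 440 Hz and that notes are written in scientific pitch notation (for example `A4`, `F#5`, `Bb2`); the function name and the sample note list are made up for this illustration.

```python
# Semitone offsets of the natural notes above C within one octave.
NOTE_OFFSETS = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def note_to_frequency(note: str, a4_hz: float = 440.0) -> float:
    """Convert a note name such as 'A4', 'C#3' or 'Bb2' to a frequency in Hz.

    Assumes twelve-tone equal temperament with A4 = a4_hz.
    """
    semitone = NOTE_OFFSETS[note[0].upper()]
    rest = note[1:]
    # Apply any sharps (#) or flats (b) that follow the letter.
    while rest and rest[0] in "#b":
        semitone += 1 if rest[0] == "#" else -1
        rest = rest[1:]
    octave = int(rest)
    midi_number = 12 * (octave + 1) + semitone  # A4 is MIDI note 69
    return a4_hz * 2 ** ((midi_number - 69) / 12)


if __name__ == "__main__":
    # Hypothetical list of notes written down at the piano.
    for name in ["E2", "A4", "F#5"]:
        print(f"{name}: {note_to_frequency(name):.1f} Hz")
```

This gives the fundamental only. Real instruments also produce overtones above that frequency, so a published chart or an analyzer readout remains the better reference.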

“In conclusion, I hope this article will help to clear up some things. While the technology has greatly improved over the years, a lot of problems still remain to be solved. I trust that the hearing aid business will rise to the challenge and meet the needs of musicians. After all, whatever is learned and addressed will go far towards improving aids for the average user.”

RL

If you would like to correspond with Rick, please send us your email and we will forward it to him.

And please do continue to email the project team with your ideas and experiences: [email protected]

Despite being branded as tone deaf in my schooldays, and suffering from moderate hearing loss too, seven years ago I started to play trombone. Now, aged 69, I play in a brass band. My wife, Carol, a lifelong musician who plays euphonium, has a severe hearing loss as well, a long term condition combined with severe tinnitus.

Players should be able to clearly hear neighbouring players, so as to be able to play in time and in tune with one another. They also need to hear other sections of the band to effect the overall tuning and timing of the music. As a trombone player, I need to be able to hear euphonium and baritone horns immediately in front, to perceive the higher pitched sounds of the cornets from the far side of the band, to be aware of the horns, all whilst not forgetting the basses (tubas) which are hard to ignore. And in rehearsal, it is important of course to hear the instructions from the conductor!

For me, playing without hearing aids is not a realistic option. With my high frequency loss, I am barely aware of the cornet sounds and much of the articulation is lost. The resultant dead and rather woolly musical environment, with no perception of commands from the conductor would preclude participation.

Carol uses two Phonak Nathos SP aids with features such as the frequency translation of higher pitched sounds, enabling her to comprehend some of those missing high frequency sounds. Early experience with these aids suggested that she had trouble precisely pitching and playing in tune and this was particularly evident if playing in a small ensemble. Fortunately, the enabling of a music program, disabling some features of the aids, made a dramatic difference and in the small ensemble she was able to play far more reliably in tune. However, in band a major problem unfolded whereby when playing her euphonium, especially when accompanied by a neighbouring euphonium, she could hear virtually nothing of the rest of the band. This problem intrigued me and I set about trying to find why the euphonium was so troublesome!

All brass instruments have characteristic spectral properties, whereby the fundamental of a note with a particular set of overtones gives the instrument its sound. The different instruments differ in size (from the tiny soprano cornet to the large B-flat tuba) and in construction with the size and degree of taper in the bore. The trombone is a parallel bore instrument, and this reflects in the sound which is rich in overtones – the FFT analysis here shows peaks extending to the 15th or even 20th harmonic of the note being played. Curiously, the trombone can seemingly be very light on the fundamental of the note being played. In contrast, the euphonium with its taper bore is very strong in the fundamental, with the overtones rapidly dying away. The similarly pitched baritone horn with a less tapered bore has a spectrum more like that of the trombone.
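The kind of harmonic comparison described above can be sketched in a few lines. This is a minimal illustration, not the analysis used for the post: the two tones are synthesized, and their harmonic weights are invented to mimic a weak fundamental with many overtones versus a strong fundamental with overtones that die away quickly. A 100 Hz fundamental is assumed so that the 800 samples hold a whole number of cycles, which keeps the single-frequency DFT exact.

```python
import math

SAMPLE_RATE = 8000   # samples per second (assumed for this sketch)
NUM_SAMPLES = 800    # 0.1 s, i.e. ten whole cycles of a 100 Hz tone


def synthesize(fundamental_hz, harmonic_amps):
    """Sum sine waves at integer multiples of the fundamental."""
    return [
        sum(amp * math.sin(2 * math.pi * fundamental_hz * (k + 1) * i / SAMPLE_RATE)
            for k, amp in enumerate(harmonic_amps))
        for i in range(NUM_SAMPLES)
    ]


def harmonic_amplitudes(samples, fundamental_hz, count):
    """Measure the amplitude at each of the first `count` harmonics.

    Evaluates the DFT only at the harmonic frequencies instead of
    running a full FFT.
    """
    n = len(samples)
    amps = []
    for h in range(1, count + 1):
        w = 2 * math.pi * fundamental_hz * h / SAMPLE_RATE
        re = sum(s * math.cos(w * i) for i, s in enumerate(samples))
        im = sum(s * math.sin(w * i) for i, s in enumerate(samples))
        amps.append(2 * math.hypot(re, im) / n)
    return amps


if __name__ == "__main__":
    # Invented weights, for illustration only (not measured data).
    tones = {
        "trombone-like": [0.3, 1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4],
        "euphonium-like": [1.0, 0.4, 0.15, 0.05, 0.02],
    }
    for name, weights in tones.items():
        measured = harmonic_amplitudes(synthesize(100.0, weights), 100.0, 8)
        print(name, [round(a, 2) for a in measured])
```

With a real recording the note would rarely fit a whole number of cycles, so a windowed FFT from an audio analysis tool would be the more practical choice.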